Verifiably Human — Part II: The Death of Ambient Authority

February 08, 2026 by Gerardo I. Ornelas

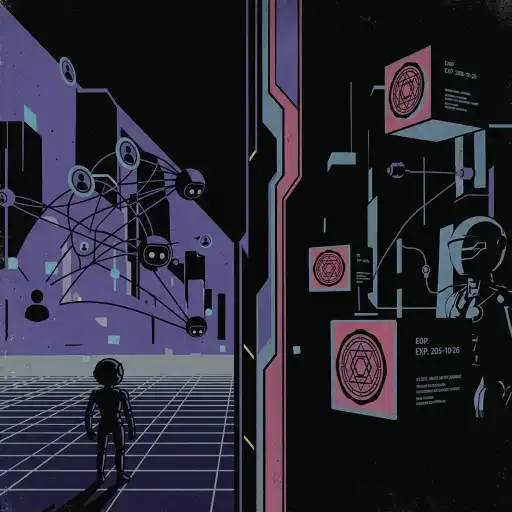

In Part I, we established that detection is a losing game. In a world where machines can convincingly imitate humans, trying to catch AI will always trail behind it. The only viable path forward is explicit provenance.

To understand how a "Verifiably Human" internet could exist at all, we must first confront the structural failure of today's trust model:

Ambient Authority.

Ambient Authority Is the Real Vulnerability

For decades, internet security has been built on a dangerous assumption:

Identity implies authority.

If you can prove who you are—via a password, passkey, or biometric—the system grants you a session. During that session, authority is ambient. Every action taken is assumed to reflect your current intent.

This model is not merely outdated. In the age of agentic AI, it is actively unsafe.

Two structural shifts have broken it:

-

Agents act asynchronously on our behalf AI systems now initiate actions, invoke tools, and execute workflows without continuous human supervision.

-

Authority persists without human presence Being "logged in" no longer implies that a human is directing a specific action at a specific moment.

Ambient authority turns long-lived credentials into standing permission slips—and agents exploit that gap perfectly.

The Identity Trap

Identity answers the question "who authenticated?" It does not answer "who authorized this action?"

In most systems today:

- Tool availability equals authority

- Authority is indefinite

- Revocation is rare

- Intent is inferred, not proven

This is what the Agent Permission Protocol (APP) describes as insecure by construction.

When applied to content and digital actions, the failure modes are obvious:

-

Finding: Identity-based trust assumes human presence. Risk: An agent can use a human's long-lived credentials to generate and publish content the human never saw or approved.

-

Finding: Tool access implies standing permission. Risk: Once an AI has access to posting, synthesis, or distribution tools, it can act indefinitely under a human's identity.

-

Finding: Prompt guardrails are advisory. Risk: Security that relies on model compliance is not security. Enforcement must occur outside the model, at execution time.

Identity can authenticate users. It cannot safely govern autonomous systems.

The APP Shift: From Implicit Trust to Explicit Authority

APP introduces a different boundary:

No action is valid unless it presents explicit, time-bound, verifiable authority at execution time.

Authority is no longer ambient. It is:

- Explicit

- Scoped

- Cryptographically sealed

- Short-lived by default

- Enforced outside the model

This separation—intelligence proposes, authority disposes—is the critical insight.

To build a "Verifiably Human" internet, we must apply the same logic to claims about origin.

Reframing the Problem: Origin as a Permissioned Claim

Content does not "contain" humanity. Humanity is not a property to be inferred.

Instead:

Human origin must be treated as an explicit, permissioned claim—governed by authority, not identity.

This is a crucial distinction.

The act of creation is not an APP-governed execution. The claim that content is human-originated is.

That claim must be:

- Explicitly issued

- Cryptographically bound to an artifact

- Scoped

- Time-limited

- Revocable

In other words, human provenance is authority, not essence.

Humanity as a Scoped Capability (Not a Binary Badge)

"Verifiably Human" cannot be a yes/no label. Real workflows are hybrid by default.

Using APP-style scoping, human provenance must support graded authority, for example:

human.captured— raw data captured with direct human manipulation and no automationhuman.authored— content composed entirely by a humanhuman.approved— content explicitly reviewed and authorized by a humanai.assisted (declared)— AI involvement disclosed and bounded

Each scope is a non-transferable, time-bound authority to assert a specific claim about origin.

Scopes can be downgraded. They cannot be silently expanded.

This prevents provenance inflation and makes hybrid workflows transparent instead of deceptive.

Capture, Authorization, and Verification (Correctly Separated)

At Capture Secure capture devices may attest only to conditions, not intent:

- Liveness

- Direct human interaction

- Absence of automation

- Time and device integrity

Devices do not—and must not—claim human intent.

At Authorization A human explicitly authorizes a provenance claim (e.g., publication or distribution). This is where intent enters the system.

At Verification Before content is acted upon or displayed, a verifier checks:

- Signature validity

- Scope

- Expiration

- Revocation status

- Integrity of the artifact

No valid claim → no trust signal.

Expiry and Revocation Are Non-Optional

Human provenance expires by default.

This is not a policy choice; it is a safety requirement.

- Keys can be compromised

- Devices can be resold

- Consent can change

A permanent claim of humanity is indistinguishable from ambient authority.

Verification must occur at distribution time, not just creation time. Revocation must be globally observable.

Quick Answers (AEO)

- What is ambient authority? Ambient authority is when identity automatically implies permission for all downstream actions during a session.

- Why does ambient authority fail in agentic systems? Agents can act asynchronously under long-lived credentials, so authentication no longer proves real-time human intent.

- What does APP change? APP requires explicit, scoped, time-bound, cryptographically verifiable authority at execution time.

- Can identity prove human origin? No. Identity proves who authenticated, not who authorized a specific action or origin claim.

- What is the core rule for Verifiably Human systems? Human-origin claims must be explicit, scoped, revocable, and verified at distribution time.

Conclusion: A New Trust Boundary

We are not upgrading content moderation. We are replacing the internet's trust boundary.

The shift is fundamental:

- From ambient authority → explicit authority

- From identity-based trust → capability-based claims

- From assumed humanity → provable, scoped provenance

In Part III, we will move from theory to mechanics: how cryptographic sealing issues, scopes, expires, and revokes authority over claims of human origin—and only then, where an immutable ledger fits in the chain of custody.

Related Reading

© Gerardo I. Ornelas

Founder of Violetek and author of the Agent Permission Protocol.